Are spam filters damaging your cash flow?

One worrying trend we’ve noticed in recent months is the increasing likelihood that our customers’ spam filters catch our monthly invoices, either sending them to the oft-ignored spam folder, or rejecting them outright.

Needless to say, this is concerning: our customers either won’t know their credit card is being charged (if they’re on our auto-bill system) or won’t know that payment is due, risking suspension of their account.

Intuitively, it makes sense that spam filters would attach a high spam score to invoices & payment requests, as these sorts of documents feature very often in spam and phishing attempts.

So, what to do about it?

Our experiments revealed that nearly every major spam filtering system is substantially less likely to classify an email as spam if it originates from a well-known, reputable mail service such as Gmail, Yahoo Mail, Hotmail, etc. The identity of the originator is determined by the sending server’s IP address rather than the unreliable From: header.

Bear in mind that your web server either has no reputation value at all or, worse, has an IP address that was previously leased to less scrupulous operators. As availability of IP addresses tightens, you can expect that the IPs attached to your shiny new server have been used by numerous websites & servers before reaching you.

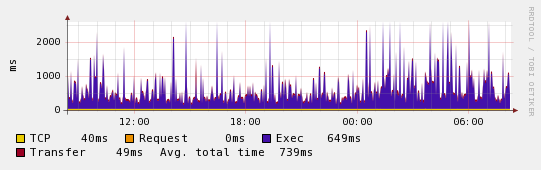

We’ve experienced this situation a number of times, because we maintain a large number of servers in physically disparate locations (hence on different networks) and need to ensure email alerts can be delivered from all of them.

Google to the rescue!

At this point a possible solution becomes clear: route important email via a well-known and reputable service to improve its chances of successful delivery.

We’ve been trialling this by using the SMTP relay service provided by Google Apps Premier Edition, which powers all email destined for the wormly.com domain. It’s dramatically improved the situation for us thus far, and provides the additional benefit of archiving all web server outbound email within Gmail.

If you’d like to try something similar, I’ve posted a howto for configuring Postfix to relay via Gmail’s SMTP service.
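
The howto covers Postfix. If your invoices are sent directly from application code instead, the same idea can be sketched in Python with the standard library's `smtplib`. This is a minimal illustration rather than our production setup: the function names, the invoice wording, and the use of `smtp.gmail.com` on port 587 with STARTTLS are assumptions, and your own relay host, port, and credentials may differ.

```python
import smtplib
from email.message import EmailMessage

# Assumed relay endpoint: Gmail's SMTP submission host with STARTTLS.
# Substitute whatever host and port your own provider documents.
RELAY_HOST = "smtp.gmail.com"
RELAY_PORT = 587


def build_invoice_email(sender, recipient, invoice_id, amount_due):
    """Build a plain-text invoice notification (hypothetical wording)."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = f"Invoice {invoice_id}"
    msg.set_content(f"Invoice {invoice_id} for {amount_due} is now due.\n")
    return msg


def send_via_relay(msg, username, password, host=RELAY_HOST, port=RELAY_PORT):
    """Hand the message to the relay over an encrypted, authenticated session.

    The recipient's spam filter then sees the relay's IP address as the
    originator, not the IP address of the web server running this code.
    """
    with smtplib.SMTP(host, port, timeout=30) as smtp:
        smtp.starttls()                  # upgrade the connection to TLS
        smtp.login(username, password)   # relay requires authentication
        smtp.send_message(msg)
```

To use it, call `build_invoice_email(...)` and pass the result to `send_via_relay(...)` along with the account credentials for the relay. Keeping message construction separate from delivery makes it easy to switch relays later without touching the invoice code.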